By James Case

For Freeman Dyson, a lifelong interest in Darwinian evolution has led to “a new career as a self-proclaimed expert in the theory of games” [2]. William Press, a computer scientist and computational biologist at the University of Texas, Austin, set the story in motion with an e-mail message to Dyson. Having identified a previously unnoticed class of strategies for playing the game of iterated prisoner’s dilemma, Press was surprised to see his simulations crash when pitting one such strategy against another. Could Dyson explain why this was happening?

Press is an experimentalist concerned mainly with computer programs. Dyson, a theoretician more comfortable with equations, soon identified a determinant that, when equal to zero, permits the singular behavior Press had observed. Their joint paper on the subject [3] has caused a stir in the world of theoretical biology.

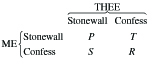

Prisoner’s dilemma is a deceptively simple, symmetric non-zero-sum binary decision game. In the iconic version, two thieves are apprehended in the vicinity of a crime scene, in possession of stolen goods. They will be interrogated separately by police. Should neither confess, both will be found guilty of some minor offense. If either rats on the other, however, the silent party will receive a harsh sentence while the betrayer walks free. Finally, if each rats on the other, both will serve intermediate sentences, longer than if both had remained silent but substantially shorter than the one that would be imposed on a silent victim of betrayal. The numerical payoff scheme for players ME and THEE is shown in the following matrix, where \(S < P < R < T\).

|

Many authors use \((S, P, R, T) = (0,1,3,5)\) for illustrative purposes, and assume that \(2R > S + T\) for technical reasons. It is sometimes useful to identify the foregoing “payoff matrix” and its transpose with the 4-vectors \({\boldsymbol{\Pi}} = (P,T,S,R)\) and \(\boldsymbol{\Pi^{\prime}} = (P,S,T,R)\).

Iterated prisoner’s dilemma, in which the same two players play many rounds of PD (enabling each to predicate his own decisions on the other’s past behavior), has become an important model in biology, primatology, and various social sciences for situations in which each of two players must decide repeatedly whether to cooperate in the upcoming round of play. In the generalized version of IPD, “stonewall” exemplifies “cooperation” (c); “confess” represents the binary alternative (d), “don’t cooperate.” A glance at the reward matrix reveals that c is the riskier—but potentially more rewarding—of the two choices. The fact that no two entries in the reward matrix are identical means that ME can tell after each round of PD exactly what THEE did and (if desired) maintain a complete record of both players’ decision histories. Perhaps the most surprising fact to emerge from Dyson’s analysis—presented in Appendix A of [3]—is that there is no certain advantage in so doing. Neither party has anything to gain by possessing a longer memory than the other!

For this and other reasons, it makes sense to focus initially on the version of IPD in which neither player cares to remember anything more of the past than the outcome of the latest round. Because the possible rewards \({S,P,R,T}\) in PD correspond to the ME\THEE decision pairs {d\c, c\c, d\d, c\d} = {1,2,3,4}, a stationary mixed strategy for ME consists of a 4-vector \(\boldsymbol{p} = (p_{1},p_{2},p_{3},p_{4})\) in which \(p_i\) represents the probability that ME elects to cooperate (c) in the next round given that \(i \in \{1,2,3,4\}\) was the pair most recently chosen. Similarly, a stationary mixed strategy for THEE consists of a 4-vector \(\boldsymbol{q} = (q_{1},q_{2},q_{3},q_{4})\) in which \(q_i\) represents the probability with which THEE elects to cooperate (c) in the coming round when acting on the same information.

For a pair \((\boldsymbol{{p,q}})\) of stationary mixed strategies, one can form the \(4 \times 4\) Markov matrix \(\boldsymbol{M}(\boldsymbol{{p,q}})\) governing round-to-round transitions among elements of {1,2,3,4}. If \(\boldsymbol{{M^{\prime}}} = \boldsymbol{M} – \boldsymbol{I}\), the stationary vector \(\boldsymbol{v}\) of the Markov process then satisfies both \(\boldsymbol{{v^TM}} = \boldsymbol{{v^T}}\) and \(\boldsymbol{{v^TM^{\prime}}} = \boldsymbol{0}\). From these Dyson concludes that the dot product \(\boldsymbol{v} \cdot \boldsymbol{f}\) of the stationary vector \(\boldsymbol{v}\) with an arbitrary 4-vector \(\boldsymbol{f}\) can be expressed as a \(4 \times 4\) determinant \(\boldsymbol{{v \cdot f}} = D(\boldsymbol{{p,q,f}})\) in which the second and third columns are the respective transposes of \(\boldsymbol{\tilde{p}} = (–1 + p_{1},–1 + p_{2},p_{3},p_{4})\) and \(\boldsymbol{\tilde{q}} = (–1 + q_{1},–1 + q_{2},q_{3},q_{4})\), and the fourth column is \(\boldsymbol{f}\) itself. Clearly, \(\boldsymbol{\tilde{p}}\) is not influenced by THEE, and \(\boldsymbol{\tilde{q}}\) is not influenced by ME. The steady-state payoffs to ME and THEE are \(\pi_{ME} = \boldsymbol{{v \cdot \Pi/v \cdot} 1} = D(\boldsymbol{{p,q,\Pi}})/D(\boldsymbol{{p,q,}1})\) and \(\pi_{THEE} = \boldsymbol{{v \cdot \Pi}}/\boldsymbol{{v \cdot} 1} = D(\boldsymbol{{p,q,\Pi^{\prime}}})/D(\boldsymbol{{p,q,}1})\), where \(\boldsymbol{1}\) denotes a vector of ones. Thus, for any real constants \(\alpha,\beta,\gamma\),

\[\begin{equation}

\alpha \pi_{ME} + \beta \pi_{THEE} + \gamma =\\

\\

\frac{D(\boldsymbol

{{p,q}},\alpha \boldsymbol{{\Pi}}+\beta\boldsymbol{{\Pi^{\prime}}}+\gamma\boldsymbol{1})}{D(\boldsymbol{{p,q,1}})}.

\end{equation}\]

This is Dyson’s key equation. Plainly, it affords ME (resp. THEE) the unilateral power to choose—simply by requiring that \(\boldsymbol{{\tilde{p}}}\) (resp. \(\boldsymbol{{\tilde{q}}}\)) be a scalar multiple of the fourth column \(\boldsymbol{f} = \alpha \boldsymbol{{\Pi}} + \beta{\boldsymbol{{\Pi^{\prime}}}} + \gamma{\boldsymbol{1}}\) of the determinant in the numerator—a strategy \(\boldsymbol{p}\) (resp. \(\boldsymbol{q}\)) that causes \(D(\cdot,\cdot,\cdot)\) to vanish, along with the linear combination \(\alpha\pi_{ME} + \beta \pi_{THEE} + \gamma\). Dyson and Press call such strategies “zero-determinant” strategies, and report that although they exist for all iterated non-zero-sum binary decision games, the existing literature appears to make no mention of them.

It turns out that, although ME (resp. THEE) can choose a ZD strategy \(\boldsymbol{p}\) (resp. \(\boldsymbol{q}\)) that causes \(\pi_{THEE}\) (resp. \(\pi_{ME}\)) to assume any desired value in the interval \([P,R]\), he is unable to exert similar control over his own steady-state reward \(\pi_{ME}\) (resp. \(\pi_{THEE}\)). Perhaps more interestingly, for any \(\chi > 1\), ME can choose \(\boldsymbol{p}\) to guarantee that \(\pi_{ME} = \chi \cdot \pi_{THEE}\). Press calls such \(\boldsymbol{p}\) “extortion strategies,” and Dyson finds them to exist for both players and for any value of the “extortion factor” \(\chi\). Difficulties naturally arise when both players attempt to claim a lion’s share of the available rewards.

In an evolutionary setting, attempts at extortion could result in the stronger of two competing species driving, or attempting to drive, the weaker from a previously shared environment. In an economic milieu, it could correspond to a “price war” in which each of two competing firms sets out to drive the other from a market they alone serve. In practice, such campaigns rarely last long, terminating when it becomes obvious to both parties that the price of victory is sure to exceed its possible worth. Thereafter, the situation typically reverts to something resembling the status quo ante. On the rare occasions when price aggression does succeed, it tends to be because the victor began with a larger “war chest” than the vanquished.

The Press–Dyson results contradict recent thinking about IPD, much of which emerged from two round-robin computer tournaments organized [1] by Robert Axelrod in the late 1970s. In both, the most successful strategies were those seeking to establish cooperation rather than dominance. As a result, most game theorists concluded that dominance is rarely more than an unrealizable pipedream.

Press and Dyson strengthen their argument for the efficacy of extortion strategies by demonstrating—in Appendix B of [3]—that they perform as advertised against non-stationary as well as stationary opposing strategies. This leads them to the decidedly implausible conclusion that, unless able to read an opponent’s mind, players of IPD can do no better than accept whatever minuscule reward is offered by the executor of an extortion strategy. Price wars, into which both parties enter on the theory that “THEE is less well equipped to prevail than ME,” would seem to offer a counter-example. In most such contests, both theories are quickly disproven, and both combatants emerge gratified that they refused the pittance initially offered them. One wonders what feature of the Press–Dyson model enables extortion strategies to perform so well.

Press and Dyson express greater interest in Darwinian evolution than in extended economic competition, despite abstract results concerning IPD that seem equally relevant to both. Dyson, in particular, seems anxious [2] to challenge the currently “fashionable dogma” that individual selection is the driving force behind evolution. Group selection, in which groups either enforce or fail to enforce rules requiring within-group cooperation, is in his view at least as important. Dyson writes that he does not “take the Prisoner’s Dilemma seriously as a model of the evolution of cooperation,” because he suspects that groups unable to enforce cooperation lose “the battle for survival collectively, rather than individually.” Human beings have evolved a limited ability to cooperate by learning to punish non-cooperators. In Dyson’s opinion, “The Prisoner’s Dilemma didn’t have much to do with it.”

References

[1] R. Axelrod, The Evolution of Cooperation, Basic Books, New York, 1984.

[2] F.J. Dyson, The prisoner’s dilemma, IAS Inst. Lett., Fall 2012.

[3] W.H. Press and F.J. Dyson, Iterated prisoner’s dilemma contains strategies that dominate any evolutionary opponent, Proc. Natl. Acad. Sci. USA, 109:26 (2012), 10409–10413.

James Case writes from Baltimore, Maryland.